You may have heard the term “3D rendering”, but what exactly is it? And how does it work?

In the early 1960s, Ivan Sutherland – known as the “Father of computer graphics” – invented Sketchpad, a pioneering interactive graphics program that is widely regarded as the ancestor of modern CAD and 3D modeling software. Since then, and especially following a boom in the ’90s, 3D computer graphics programs have continued to be released and regularly updated.

As their name implies, these programs allow the user to carry out 3D-related workflows, and 3D rendering is, in a nutshell, the part of that software that lets them see the result on a 2D screen.

3D rendering has been and continues to be a revolutionary technology that allows us to see and experience the “digital” world like never before.

In an era of ever-expanding digital entertainment, 3D rendering has been constantly evolving. In this article, we’ll look at its different types, its use cases, and the software you can work with.

So without further ado, let’s get started!

What Is 3D Rendering?

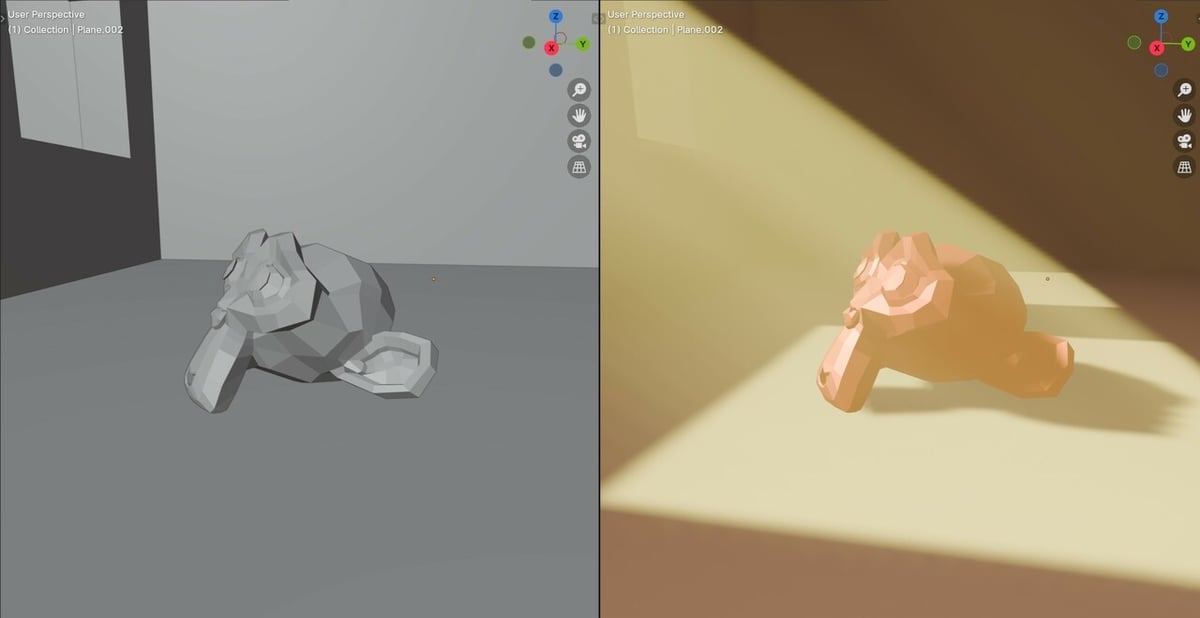

Although at first it may sound complex, 3D rendering is essentially the process of creating 2D images from a 3D model using specialized software. This means that a 3D model, anything from a house to a cup or even a character, appears as if “virtually photographed” in two dimensions (while looking fully dimensional).

Getting a bit more technical, 3D rendering is done by specialized software, which we will shortly look into, that uses the GPU, the CPU, or both to create renders. Rendering applications are resource-hungry programs, so quicker renders often call for hardware upgrades. Processor speed, graphics card capability and compatibility, driver compatibility, and RAM are among the many factors that enable fast, high-quality rendering.

Given this can be quite a heavy workload, professional artists or studios will usually have really powerful hardware that will allow them to render more complex scenes in shorter amounts of time.

The rendering itself is achieved by simulating how light interacts with different parts of the scene, which in turn projects an image into a virtual camera. The way these light simulations are calculated varies depending on the type of rendering used, and we will be going through them further below.
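To make the idea of projecting a scene onto a virtual camera more concrete, here is a minimal sketch of a pinhole-style perspective projection. It assumes the camera sits at the origin looking along the positive z axis; the function name `project_point` and the `focal_length` parameter are illustrative choices, not part of any particular renderer.

```python
def project_point(point, focal_length=1.0):
    """Project a 3D point (in camera space) onto the image plane z = focal_length."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    # Dividing by depth is what makes distant objects appear smaller.
    return (focal_length * x / z, focal_length * y / z)


# Two points with the same x and y, at different distances from the camera.
near = project_point((1.0, 1.0, 2.0))
far = project_point((1.0, 1.0, 4.0))
print(near)  # (0.5, 0.5)
print(far)   # (0.25, 0.25)
```

The farther point lands closer to the center of the image, which is the familiar perspective effect.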

What Is It Used for?

In a digital era, 3D rendering is used in many applications and industries, from design to entertainment. Here are a few examples:

- Games: Pretty much all 3D games work by rendering a 3D scene into a 2D screen, whether it is to be seen via a monitor or a VR headset.

- Architecture: Concepts and high-quality previews of buildings are produced by rendering 3D models of the planned buildings, and there are specialized companies, such as Render4Tomorow and DBox, that work on this.

- Film and animation: Films containing computer graphics (CG) or other 3D assets need those assets to be rendered before they are composited into the footage. Small indie studios and large companies such as Pixar or DreamWorks alike make use of 3D rendering for their productions.

- Product design: Rendering can be used to visualize concepts and finished versions of products that have not yet been manufactured. Similar to film studios, many companies such as Apple, Samsung, and Tesla use 3D rendering to display concepts of their products.

Having looked at where you might come across renders, we’ll go over the different types of 3D rendering.

Types of 3D Rendering

Throughout the years, 3D rendering has evolved and new technologies have been developed to create faster and more realistic renders. Nowadays, there are two main types of 3D rendering, which can make use of different rendering techniques.

Real-Time

This is most commonly found in games and scenarios where an object or scene has to be rendered in real time. By “real-time”, we mean that each 2D image is rendered in a small fraction of a second. Speed is measured in “frames per second” (FPS), which can range from 30 to over 100 in certain scenarios; at 30 FPS, each frame has roughly 33 milliseconds to render.
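The relationship between frame rate and the time available per frame is simple arithmetic. The short sketch below (the helper name `frame_budget_ms` is just an illustrative choice) shows how quickly the budget shrinks as the target FPS goes up.

```python
def frame_budget_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


for fps in (30, 60, 120):
    print(f"{fps} FPS -> {frame_budget_ms(fps):.1f} ms per frame")
# 30 FPS -> 33.3 ms per frame
# 60 FPS -> 16.7 ms per frame
# 120 FPS -> 8.3 ms per frame
```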

One of the most common examples of real-time rendering is video games. Imagine you’re controlling a character who is moving around a room. As the view shifts to reveal new or more detailed parts of the room, real-time rendering lets you see the surrounding world almost instantly (as long as your hardware is capable enough).

Pre-Rendering

Pre-rendering, also known as “offline rendering”, is a slower type of rendering, where each frame can take minutes if not hours to render, depending on its complexity. This is more commonly found in films and animation, where quality is more important than speed.
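A rough back-of-the-envelope calculation shows why per-frame render time matters so much for film. The numbers below (a 90-minute film at 24 FPS, one hour per frame, 1,000 machines) are hypothetical values picked for illustration, not figures from any real production.

```python
def total_render_hours(runtime_minutes, fps, hours_per_frame):
    """Return (frame count, total machine-hours) for a film of the given length."""
    frames = runtime_minutes * 60 * fps
    return frames, frames * hours_per_frame


# Hypothetical example: 90-minute film, 24 FPS, 1 hour of rendering per frame.
frames, hours = total_render_hours(90, 24, 1.0)
print(frames)        # 129600 frames
print(hours / 24)    # 5400.0 days on a single machine
print(hours / 1000)  # 129.6 hours if spread evenly across 1,000 machines
```

Under these assumptions a single computer would need years, which is why offline rendering work is typically spread across many machines.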

Films from the Avatar, Star Wars, and Dune universes, among many others, make use of 3D rendering to add backgrounds, props, or other effects that would be really hard or outright impossible to do in real life. Other films, such as the Toy Story and Cars entries, have been made entirely using 3D rendering.

That’s not to say that video games can’t be pre-rendered; the Resident Evil and Final Fantasy franchises used pre-rendered backgrounds.

Because of the nature of this type of rendering, each frame requires a lot more computation than in real-time rendering. But how is the rendering actually done?

Rendering Techniques

There are a few types of “rendering techniques” that determine how a scene is rendered.

Rasterization

Rasterization works by treating the model as a mesh of polygons whose vertices carry information such as position, texture, and color. These vertices are then projected onto a plane perpendicular to the camera’s viewing direction. With the vertices marking out the borders of each polygon, the pixels in between are filled with the right colors. Imagine painting by first having an outline for every color you paint – that’s rendering via rasterization.
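The “fill the pixels inside the outline” step can be sketched in a few lines. This minimal example assumes the triangle’s vertices have already been projected to 2D screen coordinates, and it tests each pixel center against the three edges (a common approach often called the edge-function test). It only marks covered pixels and leaves out color and texture interpolation; the function names are illustrative.

```python
def edge(a, b, p):
    """2D cross product of (b - a) and (p - a); its sign tells which side of edge a->b p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize_triangle(v0, v1, v2, width, height):
    """Return rows of text with '#' wherever a pixel center lies inside the triangle."""
    rows = []
    for y in range(height):
        row = ""
        for x in range(width):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w0, w1, w2 = edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p)
            # Inside if p is on the same side of all three edges (either winding order).
            inside = (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0)
            row += "#" if inside else "."
        rows.append(row)
    return rows


rows = rasterize_triangle((1, 1), (7, 1), (1, 7), 8, 8)
print("\n".join(rows))
```

Real rasterizers do the same kind of test in hardware for millions of triangles per frame, which is a large part of why the technique is fast.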

Rasterization is a fast form of rendering that’s especially useful for real-time rendering (e.g. computer games). It has been further improved by higher resolutions and anti-aliasing, a process used to smooth the edges of objects and blend them into surrounding pixels, although it may still lack realism when compared to other techniques.
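One simple way to think about anti-aliasing is supersampling: instead of asking “is this pixel inside the shape?” once, you ask at several points within the pixel and use the fraction that are inside to blend the edge. The sketch below assumes a hypothetical shape (everything below the diagonal line y = x) and a 4 x 4 grid of sub-samples; it is one of several anti-aliasing approaches, not the only one.

```python
def coverage(px, py, inside, n=4):
    """Fraction of an n x n grid of sub-samples in pixel (px, py) that fall inside the shape."""
    hits = 0
    for j in range(n):
        for i in range(n):
            x = px + (i + 0.5) / n
            y = py + (j + 0.5) / n
            if inside(x, y):
                hits += 1
    return hits / (n * n)


def below_diagonal(x, y):
    """Hypothetical shape used for illustration: the region where y < x."""
    return y < x


print(coverage(3, 0, below_diagonal))  # 1.0   (pixel fully inside)
print(coverage(3, 3, below_diagonal))  # 0.375 (pixel on the edge, partly covered)
print(coverage(0, 3, below_diagonal))  # 0.0   (pixel fully outside)
```

The edge pixel ends up with an intermediate value, so it is drawn as a blend of the shape and its background rather than a hard step.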

Ray Casting

In its simplest form, rasterization encounters issues when overlapping objects are present: if surfaces overlap, the last one drawn will be the one shown in the render, which can cause the wrong object to appear in front. The solution to this eventually led to the development of ray casting. This technique casts rays into the scene from the camera, then detects intersections with objects to determine the rendered pixel color. The surface a ray hits first will be shown in the render, and any intersection after that first surface will not be rendered. It’s more accurate than rasterization but also more computationally intensive.
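The “first surface hit wins” rule can be illustrated with a small sketch. It assumes a scene made only of spheres, each described by a hypothetical `(name, center, radius)` tuple, and uses the standard quadratic ray-sphere intersection test. Note that the result does not depend on the order in which objects are listed.

```python
import math


def hit_sphere(origin, direction, center, radius):
    """Return the nearest positive distance along the ray to the sphere, or None if it misses."""
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None  # the ray never touches the sphere
    root = math.sqrt(disc)
    for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)):
        if t > 1e-9:  # ignore intersections behind the ray origin
            return t
    return None


def cast_ray(origin, direction, spheres):
    """Return the name of the first sphere the ray hits, or None."""
    nearest_name, nearest_t = None, math.inf
    for name, center, radius in spheres:
        t = hit_sphere(origin, direction, center, radius)
        if t is not None and t < nearest_t:
            nearest_name, nearest_t = name, t
    return nearest_name


# The far sphere is listed first, but the near one is still the one that gets rendered.
scene = [("far", (0, 0, 10), 1.0), ("near", (0, 0, 5), 1.0)]
print(cast_ray((0, 0, 0), (0, 0, 1), scene))  # near
print(cast_ray((0, 0, 0), (0, 1, 0), scene))  # None
```

A full ray caster would repeat this for one ray per pixel and look up the color of whichever surface was hit.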

Ray Tracing

Somewhat similar to ray casting, ray tracing simulates the behavior of light by tracing rays into a scene, taking into account reflections, refractions, shadows, and more. For example, if a ray hits a reflective surface, a reflection ray is emitted at an angle and picks up light from whatever surface it hits next, which may in turn emit another set of rays. For this reason, this technique is also known as recursive ray tracing. For a transparent surface, a refracted ray is emitted once the surface is hit. Ray tracing produces highly realistic renders with accurate illumination, but requires significant computational power and is slower than the two previous techniques.
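The recursive part can be shown with a deliberately small sketch. It assumes a scene of spheres described by hypothetical dictionaries with a grayscale `brightness` and a `reflectivity` between 0 and 1, and it follows mirror reflections only; refraction, shadows, and light sources are left out to keep it short. `BACKGROUND` and `max_depth` are assumed values.

```python
import math

BACKGROUND = 0.1  # assumed brightness returned when a ray hits nothing


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def hit_sphere(origin, direction, center, radius):
    """Nearest positive distance along the ray to the sphere, or None."""
    oc = tuple(o - c for o, c in zip(origin, center))
    a = dot(direction, direction)
    b = 2.0 * dot(direction, oc)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)):
        if t > 1e-6:  # skip hits at or behind the ray origin
            return t
    return None


def trace(origin, direction, spheres, depth=0, max_depth=3):
    """Return a grayscale brightness for the ray, following reflections recursively."""
    if depth > max_depth:
        return 0.0  # stop after a fixed number of bounces
    nearest_t, nearest = math.inf, None
    for sphere in spheres:
        t = hit_sphere(origin, direction, sphere["center"], sphere["radius"])
        if t is not None and t < nearest_t:
            nearest_t, nearest = t, sphere
    if nearest is None:
        return BACKGROUND
    point = tuple(o + nearest_t * d for o, d in zip(origin, direction))
    normal = tuple((p - c) / nearest["radius"] for p, c in zip(point, nearest["center"]))
    own = (1.0 - nearest["reflectivity"]) * nearest["brightness"]
    if nearest["reflectivity"] == 0.0:
        return own
    # Mirror reflection: r = d - 2 (d . n) n, then trace the reflected ray.
    k = 2.0 * dot(direction, normal)
    reflected = tuple(d - k * n for d, n in zip(direction, normal))
    return own + nearest["reflectivity"] * trace(point, reflected, spheres, depth + 1, max_depth)


scene = [
    {"center": (0, 0, 5), "radius": 1.0, "brightness": 0.0, "reflectivity": 1.0},   # mirror
    {"center": (0, 0, -5), "radius": 1.0, "brightness": 0.8, "reflectivity": 0.0},  # matte
]
print(trace((0, 0, 0), (0, 0, 1), scene))
```

In this setup the camera ray hits the mirror sphere, bounces straight back, and picks up the brightness of the matte sphere behind the camera, so the mirror appears to show that sphere.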

Texture/Bump Mapping

This technique creates a 3D effect on an otherwise flat surface, giving the illusion of “height” for textured models. It’s typically combined with one of the techniques above, such as ray tracing, and is meant to add more realism to an object or background.
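The illusion of height usually comes from changing how a flat surface is lit rather than changing its shape. The sketch below assumes a small grayscale height map stored as a list of rows, estimates a tilted surface normal from neighboring heights, and applies simple diffuse (Lambert) shading. The function names and the `strength` parameter are illustrative.

```python
import math


def bump_normal(height, x, y, strength=1.0):
    """Estimate a unit surface normal at (x, y) from differences in a height map."""
    rows, cols = len(height), len(height[0])
    left = height[y][max(x - 1, 0)]
    right = height[y][min(x + 1, cols - 1)]
    up = height[max(y - 1, 0)][x]
    down = height[min(y + 1, rows - 1)][x]
    dx = (right - left) * strength
    dy = (down - up) * strength
    n = (-dx, -dy, 1.0)  # tilt the flat normal (0, 0, 1) away from rising slopes
    length = math.sqrt(sum(c * c for c in n))
    return tuple(c / length for c in n)


def lambert(normal, light_dir):
    """Diffuse brightness: cosine of the angle between the normal and the light direction."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


flat = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
bumpy = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
light = (0.0, 0.0, 1.0)  # light shining straight at the surface

print(lambert(bump_normal(flat, 0, 1), light))   # fully lit
print(lambert(bump_normal(bumpy, 0, 1), light))  # darker: the normal is tilted by the bump
```

The geometry is identical in both cases; only the shading changes, which is what makes the surface look raised.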

3D Rendering Engines

Knowing the different types of 3D rendering and their use cases, it’s time we look at the different types of engines that make this possible.

3D rendering engines are essentially the part of 3D software and games that makes use of your PC’s hardware, turning scenes, characters, environments, and more into a 2D image using the previously mentioned rendering techniques. This image can then be displayed in real time, as is the case with most games, or exported and composited together for films and artistic workloads.

Some of the most famous ones are:

- Arnold: Originally developed by Solid Angle and now owned by Autodesk, used in Maya, Cinema 4D, and Houdini.

- Unreal Engine: Developed by Epic Games, used in its own Unreal Engine software, 3dsMax, and Cinema 4D.

- Octane: Developed by Refractive Software LTD, it has its own software but can be used in combination with Rhino, SketchUp, and Cinema 4D.

- Redshift: Developed by Redshift and now a subsidiary of Maxon, can be used in Blender, Katana, and Cinema 4D.

- Eevee: One of Blender’s newer render engines, Eevee has become quite popular as it basically brought real-time rendering to Blender.

- Cycles: Finishing off the list with Blender’s Cycles render, only available inside of Blender but still a great and free option.

Remember that these are 3D rendering engines as opposed to software, which we will look into next. As noted above, an engine can be bundled with its own proprietary program or used from within a variety of other software.

3D Rendering Software

To finish off, we’ll look at some of the best 3D rendering software, which also includes some of the previously mentioned rendering engines.

Blender

Blender is free, open-source 3D software that can be used for a variety of applications, including rendering. It is a great option for beginners. Blender is also one of our favorite options for plenty of other tasks – you can find guides on modeling, animation, and plenty more tutorials about it. Not just that, but Blender comes with three different render engines: Cycles, Eevee, and Workbench, the last of which is intended for fast viewport previews rather than final renders. While Cycles, the older of the two main options, arguably provides the best quality, Eevee has become quite popular and offers very fast renders, especially for stylized scenes.

Maya

Developed by Autodesk, Maya is widely regarded as the industry standard for production-level 3D work. Unlike Blender, it’s not free – subscriptions are ~€280/month or ~€2,250/year. Maya has been used for popular movies such as Monsters, Inc., The Matrix, and Avatar, among many others. While plenty of users will be more than happy using Blender, as it is able to do pretty much everything that Maya does and at a very respectable quality, those with more experience looking to make the most out of their work might find Maya to be a more suitable solution.

Cinema4D

Cinema4D is developed by Maxon and, similar to Maya, it’s meant for professionals, as annual subscriptions range from €1,010 to €1,350 and monthly plans from €128 to €170. That said, it’s still a very powerful 3D rendering option, and purchasing it will also give you access to Maxon’s proprietary Redshift render engine. Unlike the previous option, it’s less associated with major movies and is more commonly featured in freelance artists’ work.

3dsMax

Also developed by Autodesk, 3dsMax is very similar to Maya but is more focused on architecture, engineering, and product design, whereas Maya is better suited for animators. Just like Maya, it’s offered at subscription prices of ~€280/month or ~€2,250/year. Even if its main audience isn’t necessarily the entertainment industry, 3dsMax has also been used in the creation of many movies such as X-Men, Super 8, Spider-Man 3, Lara Croft: Tomb Raider, and the list continues…

License: The text of "What Is 3D Rendering? Simply Explained" by All3DP is licensed under a Creative Commons Attribution 4.0 International License.